By Matthew Millar, R&D Scientist at ユニファ

This blog post looks at how to build a Local Binary Pattern (LBP) feature extractor for computer vision tasks.

Local Binary Pattern:

What is LBP

LBP is one of many feature extractors. HOG, SIFT, SURF, FAST, DoG, etc. are all similar but do slightly different things. What makes LBP different is that its main purpose is to act as a texture descriptor at a local level, giving a local representation of the texture anywhere in an image. It does this by comparing each pixel with the pixels around it. For every pixel in the image, a number of surrounding pixels (for example, the 8 pixels of a 3×3 neighborhood) is examined; this number, and how far away the neighbors are, can be adjusted as needed. The LBP value of each pixel is calculated relative to its neighbors: if a neighbor's value is greater than or equal to the center pixel's value, that neighbor is set to 1; otherwise it is set to 0.
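To make the comparison step concrete, here is a minimal NumPy sketch for a single 3×3 neighborhood (8 neighbors at radius 1). It assumes the standard convention that a neighbor greater than or equal to the center becomes 1. The function name `threshold_neighborhood` and the example patch are hypothetical, not part of any library.

```python
import numpy as np

def threshold_neighborhood(patch):
    """Compare the 8 neighbors of a 3x3 patch with its center pixel.

    Returns a 3x3 array of 0/1 values. The center entry is set to 0
    because the center is not part of its own pattern.
    """
    patch = np.asarray(patch)
    center = patch[1, 1]
    # A neighbor at least as bright as the center becomes 1, otherwise 0
    bits = (patch >= center).astype(np.uint8)
    bits[1, 1] = 0  # the center itself is not compared
    return bits

# Hypothetical example patch; the center value is 6
patch = np.array([[5, 9, 1],
                  [4, 6, 7],
                  [2, 3, 8]])
# Only the neighbors 9, 7 and 8 are >= 6, so only they become 1
print(threshold_neighborhood(patch))
```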

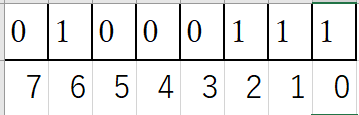

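From the table above, you can see how each cell is calculated. From this point, the 2D array of 0s and 1s (excluding the center) is flattened into a 1D array by reading the neighbors in a fixed order around the center, and that 1D array is converted into a single number.

In this example the result is 71, so 71 will be the value of that pixel in the output image. This process is repeated for every single pixel in the image.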
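The flatten-and-repeat procedure can be sketched for a whole grayscale image with NumPy. This is a naive sketch under a few assumptions: 8 neighbors at radius 1, read clockwise starting from the top-left corner (the starting point and direction are only a convention, so other implementations may produce different code numbers for the same patch), each bit i weighted by 2^i, and border pixels skipped. `lbp_image` and `OFFSETS` are hypothetical names.

```python
import numpy as np

# Offsets (row, col) of the 8 neighbors, clockwise from the top-left corner.
# This ordering is an assumption; any fixed order works if used consistently.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
           (1, 1), (1, 0), (1, -1), (0, -1)]

def lbp_image(gray):
    """Return the basic 8-neighbor LBP code of every interior pixel."""
    gray = np.asarray(gray, dtype=np.int32)
    h, w = gray.shape
    center = gray[1:h - 1, 1:w - 1]
    codes = np.zeros_like(center)
    for i, (dr, dc) in enumerate(OFFSETS):
        # Shifted view: the neighbor at offset (dr, dc) of every center pixel
        neighbor = gray[1 + dr:h - 1 + dr, 1 + dc:w - 1 + dc]
        # Bit i of the flattened array is 1 when neighbor >= center
        codes += (neighbor >= center).astype(np.int32) << i
    return codes.astype(np.uint8)

patch = np.array([[5, 9, 1],
                  [4, 6, 7],
                  [2, 3, 8]])
print(lbp_image(patch))  # -> [[26]] with this bit ordering
```

Shifting whole slices of the image, rather than looping over pixels, keeps the "repeat for every pixel" step fast in NumPy.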

This table shows how each cell is calculated:

The basic idea is to compute a value for each entry of the 1D array from its index, and then add those values up.

The value of an entry is determined by its index position in the array. If the entry at index $i$ is 1, its value is $2^i$. If the entry is 0, its value is 0 regardless of the index position. Summing the values over the whole 1D array gives the new value of the center pixel:

$$\mathrm{LBP} = \sum_{i=0}^{P-1} b_i \, 2^i$$

where $b_i \in \{0, 1\}$ is the entry at index $i$ and $P$ is the number of neighbors (8 for a 3×3 neighborhood).
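As a worked example of the weighted sum, the sketch below adds up 2^i for every index whose bit is 1. The bit array is hypothetical, chosen only to reproduce the value 71 from the example; the actual bits in the original figure may be arranged differently.

```python
def bits_to_value(bits):
    """Sum 2**i for every index i whose bit is 1 (0 bits contribute nothing)."""
    return sum(2 ** i for i, b in enumerate(bits) if b == 1)

# Hypothetical 1D array: bits at indices 0, 1, 2 and 6 are set
bits = [1, 1, 1, 0, 0, 0, 1, 0]
print(bits_to_value(bits))  # 1 + 2 + 4 + 64 = 71
```

With 8 bits the smallest possible value is 0 (all zeros) and the largest is 255 (all ones).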

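To get a feature vector from the LBP output, you first have to calculate a histogram of its values.

With 8 neighbors this is a histogram of 256 bins, since the LBP values can range from 0 to 255. (The implementation below uses scikit-image's "uniform" variant instead, which groups the patterns so that only numPoints + 2 bins are needed.)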
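A minimal sketch of the 256-bin histogram step, assuming the LBP codes are already computed as integers from 0 to 255. `lbp_histogram` is a hypothetical helper; the normalization and the small `eps` mirror what the implementation below does.

```python
import numpy as np

def lbp_histogram(lbp, n_bins=256, eps=1e-7):
    """Normalized histogram of LBP codes, usable as a feature vector."""
    # Bin edges 0, 1, ..., n_bins give one bin per possible code
    hist, _ = np.histogram(np.ravel(lbp), bins=np.arange(n_bins + 1))
    hist = hist.astype(np.float64)
    # Normalize so images of different sizes give comparable vectors;
    # eps guards against division by zero for an empty input
    return hist / (hist.sum() + eps)

codes = np.array([[0, 255],
                  [255, 3]])
features = lbp_histogram(codes)
print(features.shape)  # (256,)
```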

Python Implementation:

OpenCV does have an LBP implementation, but it is meant for facial recognition and would not be appropriate for extracting textures from clothing or environments. For this project, scikit-image's feature.local_binary_pattern is much more useful.

Let's see how to implement it.

```python
from skimage import feature
from sklearn.svm import LinearSVC
from sklearn.linear_model import LogisticRegression
import numpy as np
import cv2
import os


class LBP:
    # Constructor
    # Needs the number of sample points and the radius of the circular neighborhood
    def __init__(self, numPoints, radius):
        self.numPoints = numPoints
        self.radius = radius

    # Compute the LBP image and return its normalized histogram
    def calculate_histogram(self, image, eps=1e-7):
        # 2D array the size of the input image, holding one LBP label per pixel
        lbp = feature.local_binary_pattern(image, self.numPoints,
                                           self.radius, method="uniform")
        # Make the feature vector:
        # count how many times each of the numPoints + 2 uniform labels appears
        (hist, _) = np.histogram(lbp.ravel(),
                                 bins=np.arange(0, self.numPoints + 3),
                                 range=(0, self.numPoints + 2))
        # Normalize so the histogram sums to 1 (eps avoids division by zero)
        hist = hist.astype("float")
        hist /= (hist.sum() + eps)
        return hist


# Create the LBP extractor (12 points, radius 12)
loc_bi_pattern = LBP(12, 12)

x_train = []
y_train = []

image_path = "LBPImages/"
train_path = os.path.join(image_path, "train/")
test_path = os.path.join(image_path, "test/")

# Each subfolder of train/ is one class (e.g. wood, metal)
for folder in os.listdir(train_path):
    folder_path = os.path.join(train_path, folder)
    print(folder_path)
    for file in os.listdir(folder_path):
        image_file = os.path.join(folder_path, file)
        image = cv2.imread(image_file)
        if image is None:
            # Skip files that OpenCV cannot read as images
            continue
        image = cv2.resize(image, (300, 300))
        gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
        hist = loc_bi_pattern.calculate_histogram(gray)
        # Add the feature vector to the data list
        x_train.append(hist)
        # Add the label (the folder name)
        y_train.append(folder)
```
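The code above uses method="uniform", which is why its histogram has numPoints + 2 bins rather than 256. As far as I can tell from scikit-image's documentation, this is the rotation-invariant "uniform" mapping: a pattern whose circular bit string has at most two 0/1 transitions is labeled by its number of 1s (0 to P), and every other pattern shares the single label P + 1. The pure-Python sketch below illustrates that mapping for one pattern; `uniform_label` is a hypothetical name, not the library's internal function.

```python
def uniform_label(bits):
    """Map a circular bit pattern to its rotation-invariant 'uniform' label.

    Patterns with at most two 0/1 transitions (going around the circle)
    are labeled by their number of 1s, giving labels 0..P.
    All other patterns share the label P + 1, so there are P + 2 labels.
    """
    p = len(bits)
    # Count transitions between consecutive bits, including the wrap-around
    transitions = sum(bits[i] != bits[(i + 1) % p] for i in range(p))
    if transitions <= 2:
        return sum(bits)
    return p + 1

print(uniform_label([0, 0, 1, 1, 1, 0, 0, 0]))  # uniform, three 1s -> 3
print(uniform_label([1, 0, 1, 0, 0, 0, 0, 0]))  # 4 transitions -> P + 1 = 9
```

For P = 8, all 256 possible patterns collapse onto just 10 labels, which keeps the feature vector short and makes it insensitive to rotations of the texture.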

After that, you can choose whichever model you want to train on these histograms. I think an SVM would be best, but logistic regression or Naive Bayes could also work. It would be fun to play around with a few options to see which works best.
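For a quick, dependency-free experiment, the sketch below swaps in a different technique from the models named above: a 1-nearest-neighbor classifier using the chi-square distance, a common way to compare normalized histograms. The `chi2_distance` and `predict` names and the toy 2-bin histograms are hypothetical illustrations, not results from this post. With scikit-learn, `LinearSVC().fit(x_train, y_train)` would instead plug directly into the lists built by the code above.

```python
import numpy as np

def chi2_distance(a, b, eps=1e-10):
    """Chi-square distance between two histograms (0 means identical)."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    # eps avoids division by zero where both bins are empty
    return 0.5 * np.sum((a - b) ** 2 / (a + b + eps))

def predict(train_hists, train_labels, query_hist):
    """Return the label of the training histogram closest to the query."""
    distances = [chi2_distance(h, query_hist) for h in train_hists]
    return train_labels[int(np.argmin(distances))]

# Toy 2-bin histograms, for illustration only
train_hists = [[0.8, 0.2], [0.1, 0.9]]
train_labels = ["wood", "metal"]
print(predict(train_hists, train_labels, [0.7, 0.3]))  # -> wood
```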

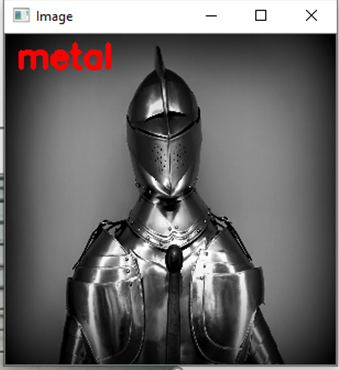

I trained and tested my code on images of metal and wood textures.

The results are pretty good for something so simple:

Pretty straightforward, since we are using scikit-image's LBP implementation. All we really need to do is create the histograms to get the feature vectors for each image. This then lets you classify other images that have similar textures.

As you can see, it works pretty well. The first “wood” image is actually metal siding, but I wanted to see how well it does on something that is very difficult to classify. This misclassification could be because the overall image looks more like a wood flooring texture than a metal texture. Even a human might struggle with this one in a black-and-white photo.

Conclusion:

The ability to extract small-scale, fine-grained details makes LBP a very handy tool for computer vision tasks. One issue, however, is that a single LBP operator works at only one scale, so it can miss larger, more global features. This can be overcome by using LBP variants that handle different neighborhood sizes, which gives better control over the scale. Whether a fixed scale or a varying one is better depends on your needs.
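One simple way to handle several scales, sketched here under my own assumptions, is to compute LBP at a few radii and concatenate the histograms into one longer feature vector. To stay dependency-free, this sketch places the 8 neighbors on a square of half-width r with integer offsets instead of the interpolated circle that scikit-image uses; the radii (1, 2, 3) and the names `lbp_at_radius` and `multiscale_lbp_features` are hypothetical choices.

```python
import numpy as np

def lbp_at_radius(gray, r):
    """Basic 8-neighbor LBP using neighbors r pixels away (integer offsets).

    Simplification: neighbors lie on a square of half-width r, not an
    interpolated circle. Pixels within r of the border are skipped.
    """
    gray = np.asarray(gray, dtype=np.int32)
    h, w = gray.shape
    offsets = [(-r, -r), (-r, 0), (-r, r), (0, r),
               (r, r), (r, 0), (r, -r), (0, -r)]
    center = gray[r:h - r, r:w - r]
    codes = np.zeros_like(center)
    for i, (dr, dc) in enumerate(offsets):
        # Neighbor at offset (dr, dc) for every center pixel
        neighbor = gray[r + dr:h - r + dr, r + dc:w - r + dc]
        codes += (neighbor >= center).astype(np.int32) << i
    return codes

def multiscale_lbp_features(gray, radii=(1, 2, 3)):
    """Concatenate one normalized 256-bin histogram per radius."""
    feats = []
    for r in radii:
        hist, _ = np.histogram(lbp_at_radius(gray, r), bins=np.arange(257))
        feats.append(hist / max(hist.sum(), 1))
    return np.concatenate(feats)

rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(32, 32))
print(multiscale_lbp_features(img).shape)  # (768,)
```

With scikit-image, the same idea amounts to calling feature.local_binary_pattern with several values of its radius argument and concatenating the resulting histograms.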

All royalty-free texture photos were retrieved from:

https://www.pexels.com/