By Maimit Patel, Software Engineer at ユニファ

In this blog post, I am going to create a SlackBot using AWS API Gateway, AWS Lambda, and the Slack Events API.

What is a SlackBot?

A SlackBot is a regular Slack app designed to interact with users through conversation. When a user mentions the bot anywhere in Slack, the bot can call the Slack APIs and do everything a Slack app can do.

Let's get started

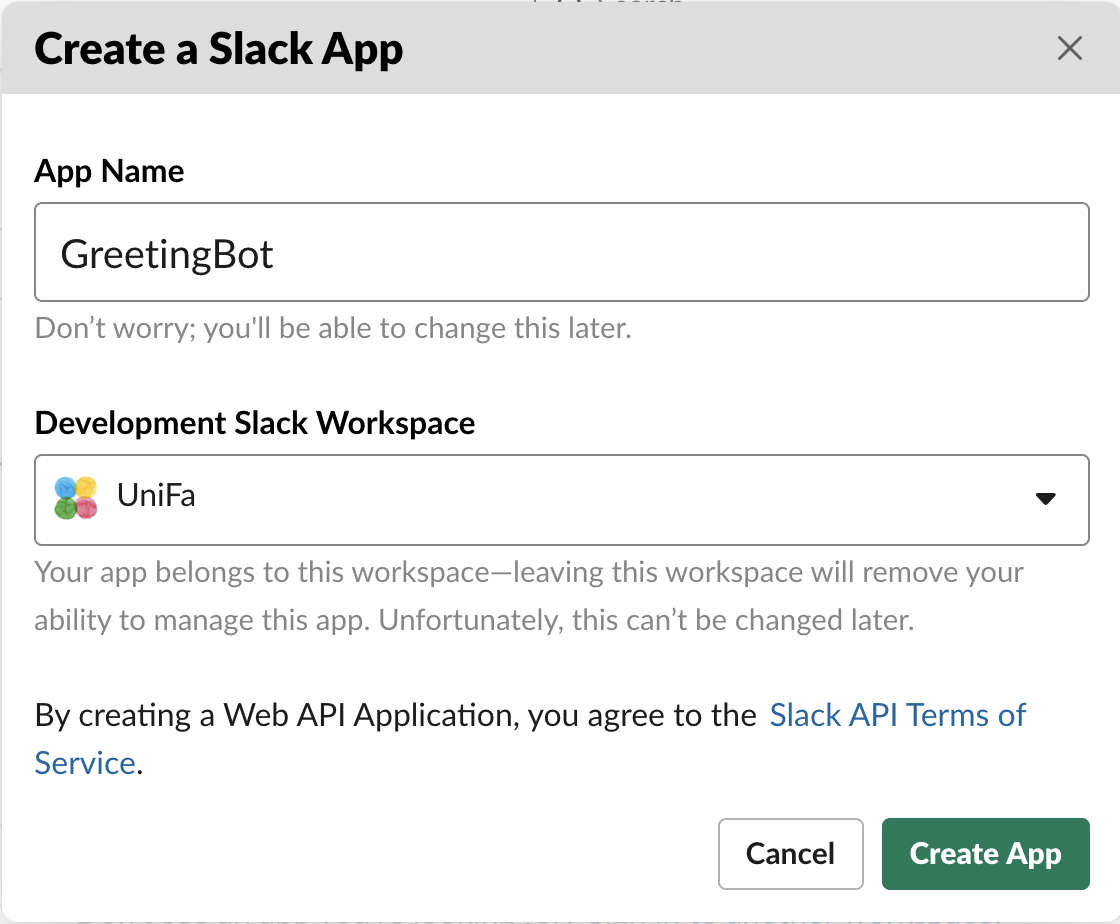

1. Set up the Slack App

Go to the Slack apps home page (https://api.slack.com/apps) and click the Create New App button.

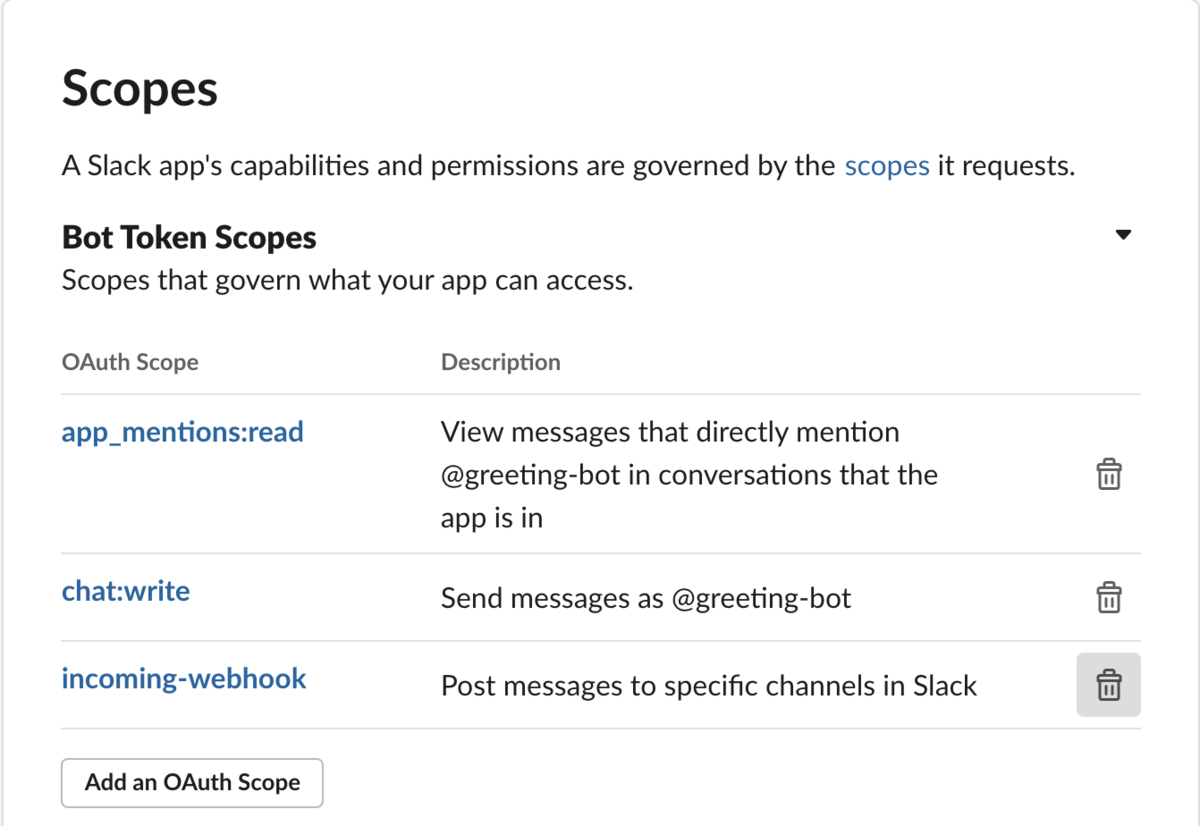

Create Slack App

Add bot scopes under the OAuth & Permissions menu. This bot needs app_mentions:read to receive mentions and chat:write to post replies.

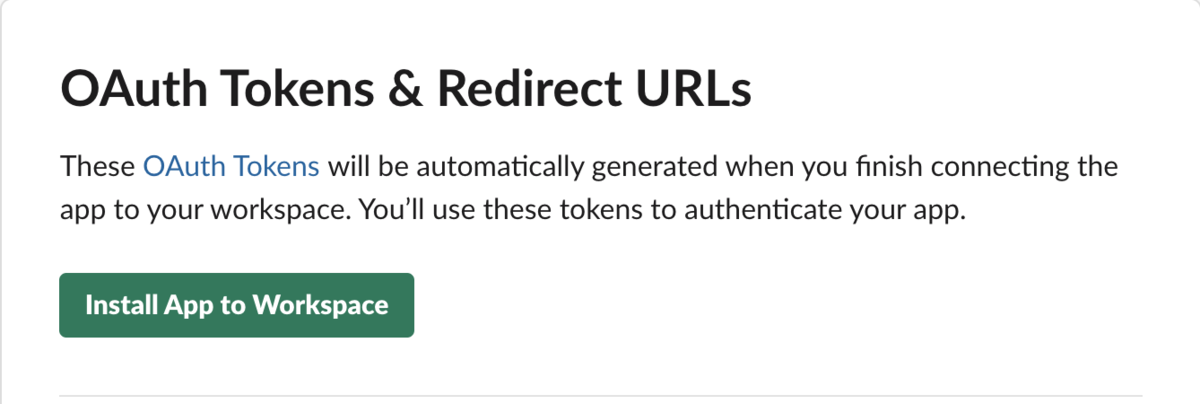

Bot scopes

Next, install the app to your workspace.

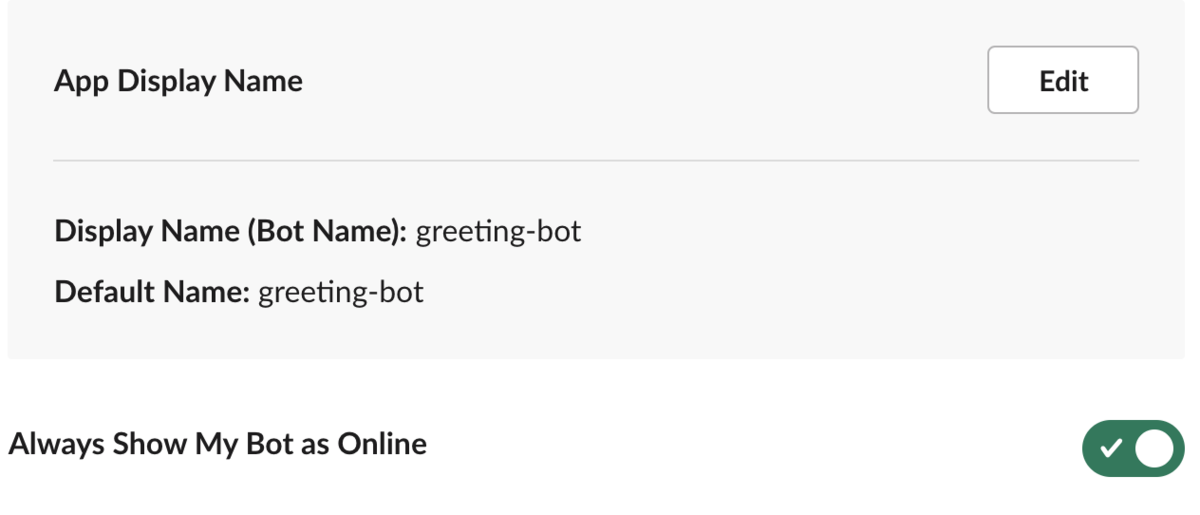

Install App to Workspace

This creates a bot user, which you can check on the App Home page.

Bot User on the App Home

2. Configure a Docker image to run the Lambda function locally

- Pull the lambci/lambda:ruby2.7 Docker image

```sh
# Open a command prompt on your local machine and run this command
# to pull the Lambda Docker image with the Ruby 2.7 runtime
$ docker pull lambci/lambda:ruby2.7
```

3. Create a Lambda function that responds to Slack

- Set up an application directory to store the Lambda Ruby function

```sh
# Create the directory
$ mkdir greeting-bot
# Move into the directory
$ cd greeting-bot
```

- Create the file greeting_bot.rb in the greeting-bot directory

```sh
# Create the Ruby file in the greeting-bot directory
$ touch greeting_bot.rb
```

Set environment variables

Find the Bot User OAuth Access Token under the OAuth & Permissions menu of the Slack App:

```sh
$ export BOT_OAUTH_TOKEN='bot_oauth_token'
```

Find the Verification Token under the Basic Information menu of the Slack App:

```sh
$ export VERIFICATION_TOKEN='verification_token'
```
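The function reads these tokens with ENV[...], which returns nil when a variable is unset, so a missing export only shows up later as a failed Slack call. A minimal sketch, assuming you would rather fail fast with a clear message; the helper load_slack_config and the constant REQUIRED_VARS are hypothetical and not part of the original code:

```ruby
# Hypothetical helper: names of the environment variables exported above.
REQUIRED_VARS = %w[BOT_OAUTH_TOKEN VERIFICATION_TOKEN].freeze

# Return the Slack settings as a Hash, raising KeyError if any variable is unset or empty.
# Accepts ENV by default, or any Hash for testing.
def load_slack_config(env = ENV)
  missing = REQUIRED_VARS.select { |name| env[name].to_s.empty? }
  unless missing.empty?
    raise KeyError, "Missing environment variables: #{missing.join(', ')}"
  end

  REQUIRED_VARS.to_h { |name| [name, env[name]] }
end
```

The handler could call load_slack_config once at startup and read the tokens from the returned Hash.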

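Update the file greeting_bot.rb:

```ruby
# greeting_bot.rb
require 'json'
require 'net/http'
require 'uri'

class GreetingBot
  # Lambda entry point, configured as the handler greeting_bot.GreetingBot.main
  def self.main(event:, context:)
    new.run(event)
  end

  def run(event)
    case event['type']
    when 'url_verification'
      # Slack sends this when you enter the Request URL (step 6)
      verify(event['token'], event['challenge'])
    when 'event_callback'
      # Reply only when the bot is mentioned
      if event['event']['type'] == 'app_mention'
        process(event['event']['text'], event['event']['channel'])
      end
    end
  end

  private

  # Verify the request from Slack by comparing its verification token
  def verify(token, challenge)
    if token == ENV['VERIFICATION_TOKEN']
      { body: { challenge: challenge } }
    else
      { body: 'Invalid token' }
    end
  end

  # Pick a reply based on the text of the mention
  def process(text, channel)
    body = if text.strip.downcase.include?('hello')
             'Hi, How are you?'
           else
             'How may I help you?'
           end

    send_message(body, channel)
  end

  # Post the reply to the mentioned channel with chat.postMessage.
  # The bot token goes in the Authorization header, as Slack recommends;
  # newer Slack apps cannot pass tokens as query parameters.
  def send_message(text, channel)
    uri = URI('https://slack.com/api/chat.postMessage')
    headers = {
      'Content-Type' => 'application/json; charset=utf-8',
      'Authorization' => "Bearer #{ENV['BOT_OAUTH_TOKEN']}"
    }
    Net::HTTP.post(uri, { channel: channel, text: text }.to_json, headers)
  end
end
```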
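The verify method relies on the legacy verification token. Slack recommends checking requests with the app's Signing Secret (also under Basic Information) instead: Slack computes an HMAC-SHA256 over v0:timestamp:body, sends it as the X-Slack-Signature header, and sends the timestamp as X-Slack-Request-Timestamp. A minimal sketch, assuming you can read those headers and the raw request body (for example from an API Gateway proxy event; the local docker run payload carries neither); valid_slack_signature? and secure_compare are hypothetical names:

```ruby
require 'openssl'

# Hypothetical helper: verify a Slack request signature (v0 scheme).
#   signing_secret: the app's Signing Secret (e.g. from an environment variable)
#   timestamp:      value of the X-Slack-Request-Timestamp header
#   signature:      value of the X-Slack-Signature header
#   body:           the raw, unparsed request body
def valid_slack_signature?(signing_secret, timestamp, signature, body, now: Time.now.to_i)
  # Reject requests older than five minutes to limit replay attacks
  return false if (now - timestamp.to_i).abs > 60 * 5

  basestring = "v0:#{timestamp}:#{body}"
  expected = 'v0=' + OpenSSL::HMAC.hexdigest('SHA256', signing_secret, basestring)
  secure_compare(expected, signature.to_s)
end

# Constant-time comparison so response timing does not reveal how many bytes matched
def secure_compare(a, b)
  return false unless a.bytesize == b.bytesize

  diff = 0
  a.bytes.zip(b.bytes) { |x, y| diff |= x ^ y }
  diff.zero?
end
```

Because the signature covers the raw body, run this check before parsing the JSON.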

- Run the Lambda function in the local environment; it replies to the channel given in the request

```sh
# Make sure the greeting-bot created in step 1 has already been invited
# to the mentioned channel (i.e. greeting-channel)
docker run \
  -e BOT_OAUTH_TOKEN=$BOT_OAUTH_TOKEN \
  --rm -v "$PWD":/var/task lambci/lambda:ruby2.7 \
  greeting_bot.GreetingBot.main \
  '{"type": "event_callback","event":{"type":"app_mention","text":"<@U>hello!","channel":"greeting-channel"}}'
```

Result of the local run
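In real app_mention events, the text starts with the bot's mention token, for example <@U0123ABCD> hello!; the sample payload above uses the placeholder <@U>. process only checks whether the text contains hello, so the token does no harm here, but stripping it first makes further keyword matching more predictable. A small sketch; mention_body and reply_for are hypothetical helpers that reuse the replies from GreetingBot#process:

```ruby
# Hypothetical helper: remove Slack user-mention tokens such as "<@U0123ABCD>"
# from an app_mention text and normalise the rest for keyword matching.
def mention_body(text)
  text.gsub(/<@[^>]*>/, '').strip.downcase
end

# Same replies as GreetingBot#process, chosen from the cleaned text
def reply_for(text)
  mention_body(text).include?('hello') ? 'Hi, How are you?' : 'How may I help you?'
end
```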

4. Upload the Lambda function to AWS Lambda

Make sure you have created a Lambda function in AWS with the Ruby 2.7 runtime and the handler greeting_bot.GreetingBot.main.

- See the AWS Lambda documentation for how to create a Lambda function.

Upload the Lambda function from your local machine using the AWS CLI:

```sh
# Create a zip file
$ zip function.zip greeting_bot.rb
# Upload the zip file to Lambda
$ aws lambda update-function-code --function-name slack-greeting-bot --zip-file fileb://function.zip
# Add `--profile profile_name` if you get an AccessDeniedException
```

Set the environment variables BOT_OAUTH_TOKEN and VERIFICATION_TOKEN in the Lambda function's configuration.

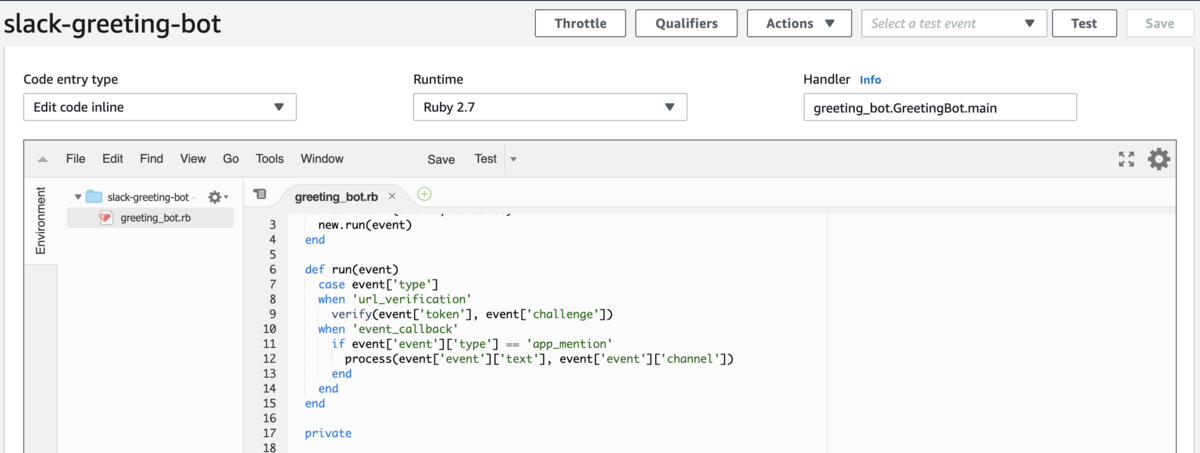

After the upload, the Lambda function looks like this:

Lambda Function

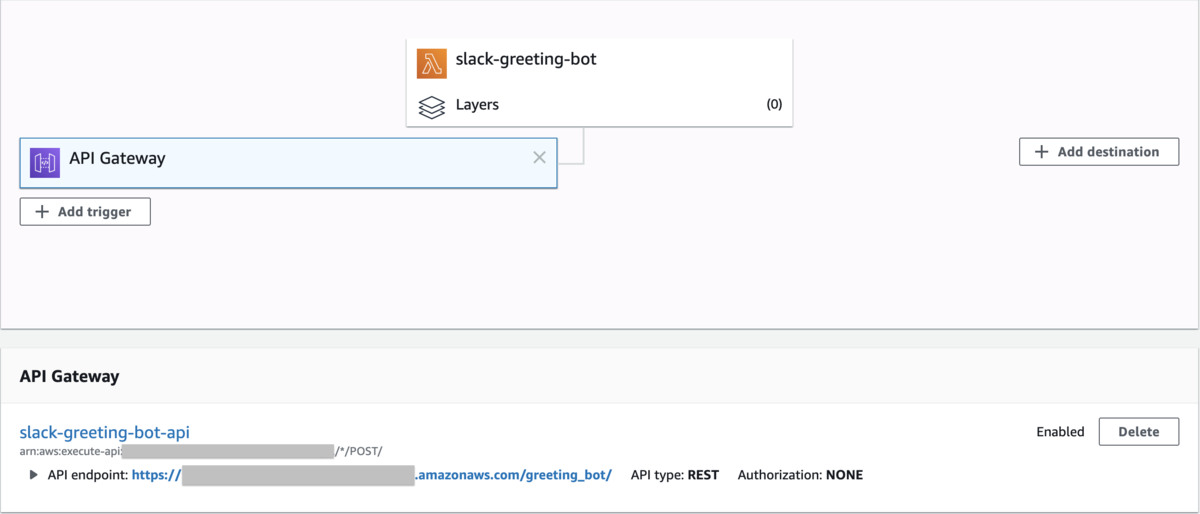

5. Create a REST API endpoint using API Gateway that calls the Lambda function

I have created a REST API in API Gateway.

- See the API Gateway documentation for how to create a REST API.

Add a trigger to the Lambda function:

- Click the Add trigger button in the Designer pane of the Lambda function
- Select the trigger (i.e. API Gateway) from the options
- Select the REST API created earlier in step 5

- Click the
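The handler reads event['type'] directly, which fits the local docker run payload and an API Gateway integration that passes the Slack JSON through unchanged as the event. If your REST API instead uses Lambda proxy integration, API Gateway wraps the request: the Slack payload arrives as a JSON string in event['body'] (Base64-encoded when isBase64Encoded is true), and the function must return a Hash with statusCode and a string body. A minimal sketch of an adapter under that assumption; ProxyAdapter is a hypothetical name, and it wraps any object with a run method, such as GreetingBot.new:

```ruby
require 'json'

# Hypothetical adapter for API Gateway Lambda proxy integration. It unwraps the
# proxy event, passes the Slack payload to the wrapped handler's run method, and
# turns the result into the { statusCode:, headers:, body: } shape API Gateway expects.
class ProxyAdapter
  def initialize(handler)
    @handler = handler
  end

  def call(event)
    raw = event['body'].to_s
    # Decode Base64 when API Gateway marks the body as encoded
    raw = raw.unpack1('m').force_encoding('UTF-8') if event['isBase64Encoded']
    result = @handler.run(JSON.parse(raw))
    body = result.is_a?(Hash) ? result[:body] : nil

    case body
    when Hash   # e.g. { challenge: '...' } from a url_verification request
      { statusCode: 200, headers: { 'Content-Type' => 'application/json' }, body: JSON.generate(body) }
    when String # e.g. 'Invalid token'
      { statusCode: 200, headers: { 'Content-Type' => 'text/plain' }, body: body }
    else        # event callbacks: Slack only needs an HTTP 200
      { statusCode: 200, body: '' }
    end
  end
end
```

With this adapter, the Lambda handler could call ProxyAdapter.new(GreetingBot.new).call(event) and leave GreetingBot itself unchanged.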

6. Set the API Gateway endpoint as the Slack Event Request URL

- Go to the Event Subscriptions menu of the Slack App and enable event subscriptions
- Enter and verify the Request URL on the same page
- The Request URL is the API Gateway endpoint created in step 5
- Subscribe to the app_mention bot event so that Slack sends mentions of the bot to the Request URL

Enable event subscriptions & verify the REST API endpoint


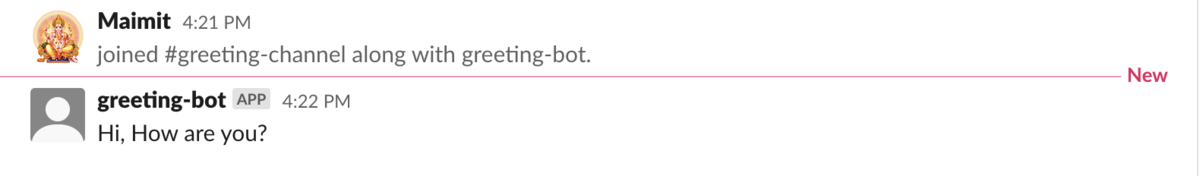

7. Test the SlackBot by mentioning it in #greeting-channel